Playwright Test Automation: The Complete Guide for QA Teams

Playwright test automation lets you run fast, reliable E2E tests across Chrome, Firefox, and Safari. This guide covers setup, selectors, auto-wait, POM, parallel execution, CI/CD integration, and debugging strategies with real code examples.

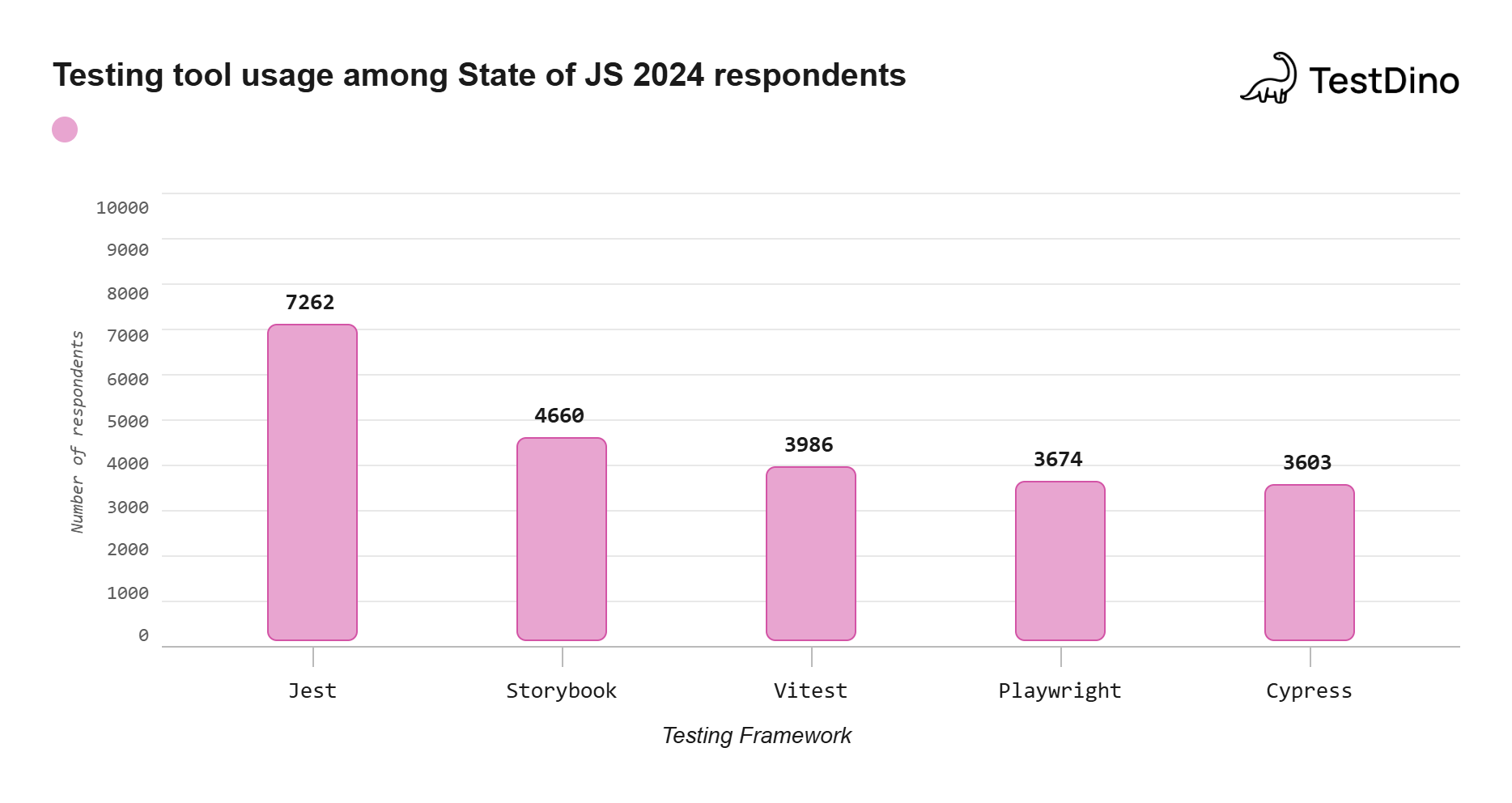

Playwright's weekly npm downloads crossed 33 million in 2024, overtaking Cypress for the first time according to npm trends data. Through 2025 and into 2026, that gap has only widened. QA teams are actively re-evaluating which framework keeps pace with their CI/CD speed requirements and cross-browser coverage goals.

The pain point is familiar: E2E tests pass locally, but half of them fail in CI with timeout errors or selector mismatches. Debugging means adding sleep calls, guessing at wait conditions, and re-running the same pipeline four times before identifying the root cause.

This guide covers Playwright test automation from initial project setup through CI/CD deployment, with real config files and code examples you can copy directly into your projects. Every recommendation here is grounded in the official Playwright documentation and validated against production CI pipelines running 200+ tests daily.

Prerequisites: Node.js 18+ and npm 8+ installed. All examples use TypeScript and Playwright v1.50+. Prior experience with any E2E testing framework is helpful but not required.

What is Playwright test automation?

Playwright test automation is the practice of using Microsoft's open-source Playwright framework to write, execute, and manage end-to-end tests that run natively across Chromium, Firefox, and WebKit browsers through a single, unified API.

Playwright is an open-source Node.js library created by Microsoft in 2020. As of early 2026, the Playwright GitHub repository has over 70,000 stars.

Unlike tools that rely on intermediary WebDriver binaries, Playwright communicates directly with browser engines using the Chrome DevTools Protocol (for Chromium) and equivalent internal protocols for Firefox and WebKit. This architecture eliminates entire categories of flaky behavior that plagued older frameworks for years.

Core capabilities that drive adoption

Playwright meets the demands of modern Agile and DevOps workflows through capabilities that go beyond basic browser control:

- Cross-browser compatibility: Automated testing with Playwright covers Chromium (Chrome, Edge), Firefox, and WebKit (Safari) from a single test script, with no driver management required.

- Cross-platform execution: Tests run on Windows, Linux, and macOS without modification, both locally and on CI runners.

- Multi-language support: Write tests in TypeScript, JavaScript, Python, Java, or .NET. The core browser API is consistent across bindings, although the @playwright/test runner (fixtures, projects, sharding) is specific to Node.js.

- Codegen: Run npx playwright codegen to record user actions and output ready-to-run test code. Teams using Playwright AI codegen push this further with intelligent test generation that adapts to application changes.

- Playwright Inspector: Step through test execution action by action, inspect selectors, view click points, and debug failures visually with npx playwright test --debug.

- Trace Viewer: Replay failed tests with full DOM snapshots, network logs, and a visual filmstrip capturing every step of the interaction.

- Automatic waiting: Playwright waits for elements to be actionable before performing any interaction, eliminating the Thread.sleep() anti-pattern that plagues Selenium suites.

- Built-in reporting: List, Line, Dot, HTML, JSON, JUnit, and blob reporters ship out of the box without third-party plugins.

- Video and screenshot capture: Record test execution as video or capture screenshots on failure for rapid visual debugging.

- Parallel execution: Run tests across multiple worker processes and shard across CI agents, all without external grid infrastructure.

These capabilities explain why Playwright has become the preferred framework for teams automating end-to-end testing of modern web applications. The sections that follow walk through how to configure each one for production use.
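A sketch of how the reporting and capture options listed above can be combined in one playwright.config.ts. The reporter list and the JUnit output path results/junit.xml are illustrative choices, not defaults:

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  // Several built-in reporters can run side by side in a single test run.
  reporter: [
    ['list'],                                       // live progress in the terminal
    ['html', { open: 'never' }],                    // browsable report; do not auto-open it
    ['junit', { outputFile: 'results/junit.xml' }], // illustrative path for CI test-result parsers
  ],
  use: {
    screenshot: 'only-on-failure', // attach a screenshot when a test fails
    video: 'retain-on-failure',    // record video, keep it only for failed tests
  },
});
```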

What makes Playwright different from Selenium and Cypress

Teams evaluating a new testing framework typically compare it against what they already use. Understanding the Playwright architecture reveals why the framework behaves differently under real-world CI pressure.

The architecture behind reliable cross-browser testing

Selenium uses the WebDriver protocol where each command round-trips through an HTTP layer to a driver binary. Each browser needs its own driver, adding latency and a maintenance burden that compounds as driver versions fall out of sync.

Playwright communicates directly with browser engines through the Chrome DevTools Protocol (for Chromium) and equivalent protocols for Firefox and WebKit. There is no driver binary sitting between your test code and the browser.

Three practical consequences follow from this architecture:

- Lower latency per action: No per-command HTTP round trip to a separate driver binary for each click, fill, or assertion.

- True cross-browser from one API: npx playwright install downloads matching Chromium, Firefox, and WebKit (Safari's engine) builds for your Playwright version. Zero driver management.

- Full control over browser contexts: Each test gets an isolated BrowserContext, lighter than a full browser instance but completely sandboxed for cookies, storage, and permissions.

Cypress takes a different approach: it runs inside the browser using JavaScript injection. This gives Cypress deep DOM access but narrows its browser coverage (Chrome-family browsers and Firefox, with WebKit support still experimental) and restricts multi-tab, multi-origin, and iframe scenarios that Playwright handles natively.

| Feature | Playwright | Selenium | Cypress |
|---|---|---|---|
| Browser communication | Direct protocol (CDP/internal) | WebDriver HTTP | In-browser JS injection |
| Cross-browser support | Chromium, Firefox, WebKit | All browsers via drivers | Chrome-family, Firefox, experimental WebKit |
| Language support | JS/TS, Python, Java, .NET | Java, Python, C#, JS, Ruby | JS/TS only |
| Auto-waiting | Built-in actionability checks | Manual explicit/implicit waits | Built-in retry-ability |
| Multi-tab/multi-origin | Native support | Supported with workarounds | Limited |
| Parallel execution | Built-in workers + sharding | Selenium Grid required | Cypress Cloud or third-party |
| Test isolation | BrowserContext per test | New browser instance | Page reload between tests |
| Native mobile testing | Emulation only | Appium integration | Not supported |

For teams ready to transition from an older framework, the Selenium to Playwright migration guide covers the full phased approach with code transformation examples.

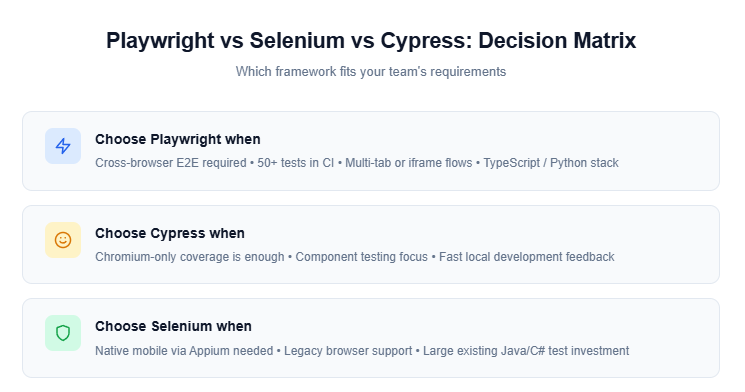

When to choose Playwright over alternatives, and when not to

Playwright is the stronger choice when your team:

- Needs real cross-browser coverage across Chrome, Firefox, and Safari

- Runs 50+ tests in CI and needs built-in parallel execution without external infrastructure

- Works with multi-tab flows, iframes, or popup-heavy applications

- Uses TypeScript or Python as their primary language

- Wants built-in debugging tools (Trace Viewer, Inspector) without third-party setup

Selenium remains the better fit when you need native mobile testing through Appium or must support legacy browsers that Playwright does not bundle. Cypress works well if your tests target Chromium exclusively and your team values its interactive test runner for local development.

The setup step most guides skip that saves hours later

Most Playwright tutorials stop at npm init playwright@latest. That gets a working project, but it leaves configuration gaps that surface the moment your suite grows past 20 tests.

Installing Playwright and configuring your test project

Start with the scaffold command:

```bash
npm init playwright@latest
```

This creates playwright.config.ts, a tests/ folder with a sample spec, and installs browser binaries. Choose TypeScript when prompted.

The config file is where your Playwright test automation setup either scales or breaks down. Here is a production-ready config that covers what the default scaffold misses:

```typescript
import { defineConfig, devices } from '@playwright/test';

export default defineConfig({
  testDir: './tests',
  fullyParallel: true,
  forbidOnly: !!process.env.CI,
  retries: process.env.CI ? 2 : 0,
  workers: process.env.CI ? 4 : undefined,
  reporter: process.env.CI ? 'blob' : 'html',
  use: {
    baseURL: process.env.BASE_URL || 'http://localhost:3000',
    trace: 'retain-on-failure',
    screenshot: 'only-on-failure',
    video: 'on-first-retry',
  },
  projects: [
    { name: 'chromium', use: { ...devices['Desktop Chrome'] } },
    { name: 'firefox', use: { ...devices['Desktop Firefox'] } },
    { name: 'webkit', use: { ...devices['Desktop Safari'] } },
  ],
});
```

Tip: Set forbidOnly: !!process.env.CI in your config. This makes the run fail if a test.only() call is committed; otherwise CI would run only the focused test and silently skip the rest of the suite. A single forgotten .only can pass a pipeline while 200 tests never execute.
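forbidOnly fails the CI run once a focused test reaches it. Some teams also catch focused tests before the commit. The helper below is hypothetical (findFocusedTests is not part of Playwright) and sketches that check as a plain-text scan you could call from a pre-commit hook; because it does not parse the code, it can also flag matches inside comments or strings:

```typescript
// Hypothetical pre-commit helper: report lines containing test.only( or test.describe.only(
function findFocusedTests(source: string): number[] {
  const focused = /\btest(?:\.describe)?\.only\s*\(/;
  const hits: number[] = [];
  source.split('\n').forEach((line, index) => {
    if (focused.test(line)) {
      hits.push(index + 1); // report 1-based line numbers
    }
  });
  return hits;
}

const spec = [
  "import { test } from '@playwright/test';",
  "test.only('debug this one', async () => {});",
  "test('regular test', async () => {});",
  "test.describe.only('focused suite', () => {});",
].join('\n');

console.log(findFocusedTests(spec)); // [ 2, 4 ]
```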

Folder structure and config options that determine CI stability

A folder structure that works for 10 tests breaks down at 100. Here is the layout that keeps large Playwright test automation suites organized:

```text
project-root/
├── tests/
│   ├── auth/
│   │   ├── login.spec.ts
│   │   └── signup.spec.ts
│   ├── checkout/
│   │   ├── cart.spec.ts
│   │   └── payment.spec.ts
│   └── dashboard/
│       └── overview.spec.ts
├── pages/
│   ├── LoginPage.ts
│   ├── CartPage.ts
│   └── DashboardPage.ts
├── fixtures/
│   └── test.ts
├── playwright.config.ts
└── package.json
```

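Group test files by feature, not by page. tests/auth/ makes more sense than tests/login-page/ because multiple tests exercise the same page from different angles. Keep in mind how grouping interacts with parallelism: with fullyParallel off, Playwright hands whole files to workers and shards, so related tests in one file run together on the same worker; with fullyParallel: true (as in the config above), individual tests are distributed independently, so no test should rely on another test in the same file.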

Browser binary management: what to verify before your first run

Playwright downloads browser binaries to a cache directory during npx playwright install. On CI runners, this cache is empty on every run unless you persist it explicitly.

Two things to verify before running tests:

Run npx playwright install --with-deps on CI. The --with-deps flag also installs the OS-level libraries (like libgbm on Ubuntu) that Playwright's browsers need. Without them, browser launches fail with a "Host system is missing dependencies to run browsers" error; skipping the install step entirely is what produces the browserType.launch: Executable doesn't exist error.

Install only the browsers your config uses. If you only test on Chromium, run npx playwright install --with-deps chromium to save download time and disk space.

Understanding the Playwright CLI commands for browser management, test execution, and report generation is essential for maintaining a stable project at scale.

5 selector strategies that keep your Playwright tests stable under UI changes

Selectors break more tests than actual bugs do. A single CSS class name change during a UI refactor can cascade into 40 simultaneous test failures. Playwright offers multiple locator strategies, but their resilience to change varies dramatically.

Role-based locators vs CSS vs XPath: a practical ranking

The official Playwright locators documentation recommends a clear priority order. Here is how each strategy ranks in practice, based on maintenance cost over time:

1. Role-based locators (most resilient)

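```typescript
await page.getByRole('button', { name: 'Submit' }).click();
// Web-first assertion: waits for the heading, unlike isVisible(), which checks once and never fails
await expect(page.getByRole('heading', { name: 'Dashboard' })).toBeVisible();
await page.getByLabel('Email address').fill('user@test.com');
```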

These mirror how screen readers and real users perceive the page. They survive CSS changes, component library upgrades, and class name refactors because they target semantic meaning rather than implementation details. Switching to role-based locators reduced selector-related failures by over 60% in our benchmarks.

2. Text-based locators

```typescript
await expect(page.getByText('Welcome back')).toBeVisible();
await page.getByPlaceholder('Search products...').fill('laptop');
```

Reliable as long as the visible text does not change. Best for headings, labels, and placeholder text that is unlikely to be modified during refactors.

3. Test ID locators

```typescript
await page.getByTestId('checkout-button').click();
```

Resilient to any visual or structural UI change, but requires developers to add data-testid attributes. This strategy works best when QA and dev teams collaborate on explicit test contracts.

4. CSS selectors

```typescript
await page.locator('.btn-primary.submit').click();
```

Fragile in practice. Breaks when class names change, which happens frequently during component-library updates and CSS-in-JS migrations.

5. XPath selectors (least resilient)

```typescript
await page.locator('//div[@class="form"]//button[1]').click();
```

Position-based XPath breaks when the DOM structure shifts even slightly. Hard to read, harder to maintain.

Best Practice: Per the Playwright docs, use role-based locators first, then text, then test-id. Reserve CSS and XPath for cases where semantic locators are genuinely not possible, such as canvas elements or complex SVG interactions.

When to use data-testid and how to enforce it across your team

Test IDs work best for interactive elements without accessible roles or labels: custom dropdowns, canvas components, or dynamically generated widgets where semantic locators cannot target the correct element.

To enforce them consistently, add a custom ESLint rule or a PR review checklist item. Playwright's default test-id attribute is already data-testid; if your team uses a different attribute name, configure it globally:

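```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  use: {
    // Attribute read by getByTestId(); 'data-testid' is the default,
    // so change it only if your team uses another name such as 'data-cy'.
    testIdAttribute: 'data-testid',
  },
});
```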

Some teams migrating from Cypress use data-cy instead. Pick one convention, document it in your team's contributing guide, and enforce it through code review.
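For the code-review side of enforcement, a small script can flag attributes that break the convention. The sketch below is hypothetical (findTestIdViolations is not part of Playwright or ESLint) and assumes the team has standardized on data-testid. It scans text line by line, so a lint rule that works on parsed JSX would be more robust:

```typescript
// Hypothetical review helper: flag test-id attributes other than the chosen data-testid.
// This list is an assumption; extend it with whatever names exist in your codebase.
const NON_CONVENTIONAL_ATTRIBUTES = ['data-cy', 'data-test', 'data-qa'];

interface Violation {
  line: number;      // 1-based line number in the scanned source
  attribute: string; // the non-conventional attribute that was found
}

function findTestIdViolations(source: string): Violation[] {
  const violations: Violation[] = [];
  source.split('\n').forEach((text, index) => {
    for (const attribute of NON_CONVENTIONAL_ATTRIBUTES) {
      // Require "=" right after the name so data-testid="..." is not reported as data-test
      if (new RegExp(`\\b${attribute}=`).test(text)) {
        violations.push({ line: index + 1, attribute });
      }
    }
  });
  return violations;
}

const component = [
  '<button data-testid="checkout-button">Pay</button>',
  '<div data-cy="cart-summary"></div>',
].join('\n');

console.log(findTestIdViolations(component)); // [ { line: 2, attribute: 'data-cy' } ]
```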

Why auto-wait eliminates 80% of flaky Playwright test automation failures

According to the flaky test benchmark report, timing issues account for the majority of flaky test root causes across all frameworks. Playwright's auto-wait mechanism directly addresses this problem and is the top reason teams cite for migrating away from Selenium.

How Playwright's actionability model works under the hood

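When you call locator.click(), Playwright repeatedly re-checks the target element and performs the action only once it is actionable. The official actionability documentation defines the conditions below; each action waits only for the ones that apply to it (click waits for visible, stable, receives events, and enabled; fill waits for visible, enabled, and editable):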

- Attached: the element exists in the DOM

- Visible: the element has a non-zero bounding box and is not hidden by CSS

- Stable: the element is not mid-animation (bounding box is consistent across two animation frames)

- Enabled: no disabled attribute or aria-disabled="true"

- Receives events: no overlay, modal, or spinner blocking the click target

- Editable (for fill actions): the element accepts text input

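When the required checks pass, the action executes immediately. If they do not pass in time, the action fails with a timeout error whose call log shows which check Playwright was still waiting on (for example, "element is not enabled"). Actions have no separate timeout by default, so the effective limit is the test timeout (default: 30 seconds) unless you set actionTimeout in the use block of your config.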
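To make the polling behavior concrete, here is a simplified model in plain TypeScript. It is not Playwright's implementation: ElementState, firstFailingCheck, and waitUntilActionable are hypothetical names, and a real browser reports element state very differently. The per-action check lists follow the actionability documentation for click, fill, and hover:

```typescript
// Simplified model of actionability checks; not Playwright's actual implementation.
type Check = 'visible' | 'stable' | 'receivesEvents' | 'enabled' | 'editable';
type Action = 'click' | 'fill' | 'hover';

// Hypothetical snapshot of what the browser reports about an element at one moment.
interface ElementState {
  attached: boolean;
  visible: boolean;
  stable: boolean;
  receivesEvents: boolean;
  enabled: boolean;
  editable: boolean;
}

// Each action waits only for the checks relevant to it.
const REQUIRED_CHECKS: Record<Action, Check[]> = {
  click: ['visible', 'stable', 'receivesEvents', 'enabled'],
  fill: ['visible', 'enabled', 'editable'],
  hover: ['visible', 'stable', 'receivesEvents'],
};

// Returns the first unmet condition, or null when the action may proceed.
function firstFailingCheck(action: Action, el: ElementState): string | null {
  if (!el.attached) return 'attached';
  for (const check of REQUIRED_CHECKS[action]) {
    if (!el[check]) return check;
  }
  return null;
}

// Re-reads the element state until the action is allowed or the timeout expires.
async function waitUntilActionable(
  action: Action,
  readState: () => ElementState,
  timeoutMs: number,
  pollMs = 50,
): Promise<void> {
  const deadline = Date.now() + timeoutMs;
  let failing = firstFailingCheck(action, readState());
  while (failing !== null) {
    if (Date.now() >= deadline) {
      throw new Error(`${action} timed out after ${timeoutMs}ms: element failed the "${failing}" check`);
    }
    await new Promise((resolve) => setTimeout(resolve, pollMs));
    failing = firstFailingCheck(action, readState());
  }
}

// Usage: an overlay covers the button for the first three reads, then disappears.
let reads = 0;
const overlaidButton = (): ElementState => {
  reads += 1;
  return {
    attached: true, visible: true, stable: true, enabled: true, editable: false,
    receivesEvents: reads > 3,
  };
};

waitUntilActionable('click', overlaidButton, 1000).then(() => {
  console.log(`clickable after ${reads} reads`); // clickable after 4 reads
});
```

The model also shows why a fixed sleep is wasteful: the poller proceeds as soon as the overlay is gone instead of always waiting the worst-case duration.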

Teams migrating from Selenium see their Playwright flaky tests drop significantly the moment they stop using manual wait strategies. No more Thread.sleep(3000) scattered across test files. The framework handles timing automatically.

Key Concept: Actionability checks are the conditions Playwright validates before user actions: attached, visible, stable, enabled, receives events (not obscured), and editable. Each action automatically waits for the subset that applies to it, with zero configuration. When a check does not pass in time, the error's call log tells you exactly which condition was not met.

Explicit wait strategies for dynamic content and edge cases

Auto-wait handles the majority of real-world scenarios. A few edge cases still require explicit handling:

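```typescript
// Wait for a specific network response triggered by an action.
// Start waiting before the action so a fast response is not missed
// ('Place order' is a hypothetical button name).
const responsePromise = page.waitForResponse(resp =>
  resp.url().includes('/api/orders') && resp.status() === 200
);
await page.getByRole('button', { name: 'Place order' }).click();
await responsePromise;

// Wait for a loading spinner to disappear
await page.locator('.loading-spinner').waitFor({ state: 'hidden' });

// Wait for an element to appear after a dynamic render (overrides the 5-second expect timeout)
await expect(page.getByRole('alert')).toBeVisible({ timeout: 10000 });
```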

The central rule from the Playwright best practices guide: never use page.waitForTimeout(). Replace every hard sleep with web-first assertions (expect(locator).toBeVisible()) or waitFor() with an explicit state condition. Hard sleeps are brittle on CI runners where execution speed varies between runs.

How we structured 200+ E2E tests using the Page Object Model

At around 50 tests, copy-pasted selectors start causing real maintenance pain. Change one button label and 15 tests break in 15 different files. The Page Object Model (POM) pattern solves this by centralizing selectors and page actions into reusable classes. It is the pattern we rely on for every Playwright test automation project.

Page Object Model pattern with full code example

Here is a login page object that encapsulates all selectors and interactions:

```typescript
import { type Page, type Locator } from '@playwright/test';

export class LoginPage {
  readonly page: Page;
  readonly emailInput: Locator;
  readonly passwordInput: Locator;
  readonly submitButton: Locator;
  readonly errorMessage: Locator;

  constructor(page: Page) {
    this.page = page;
    this.emailInput = page.getByLabel('Email');
    this.passwordInput = page.getByLabel('Password');
    this.submitButton = page.getByRole('button', { name: 'Sign in' });
    this.errorMessage = page.getByRole('alert');
  }

  async goto() {
    await this.page.goto('/login');
  }

  async login(email: string, password: string) {
    await this.emailInput.fill(email);
    await this.passwordInput.fill(password);
    await this.submitButton.click();
  }
}
```

And the test that uses it:

```typescript
import { test, expect } from '@playwright/test';
import { LoginPage } from '../../pages/LoginPage';

test('successful login redirects to dashboard', async ({ page }) => {
  const loginPage = new LoginPage(page);
  await loginPage.goto();
  await loginPage.login('admin@example.com', 'password123');
  await expect(page).toHaveURL('/dashboard');
});

test('invalid credentials show error', async ({ page }) => {
  const loginPage = new LoginPage(page);
  await loginPage.goto();
  await loginPage.login('wrong@example.com', 'badpassword');
  await expect(loginPage.errorMessage).toContainText('Invalid credentials');
});
```

Two things to notice. First, every locator is defined once in the constructor, so a changed button label means one edit instead of fifteen. Second, assertions stay in the test file, not in the page object. The page object describes what can be done on a page; the test decides what the expected outcome should be. Mixing assertions into page objects makes them harder to reuse across different test scenarios.

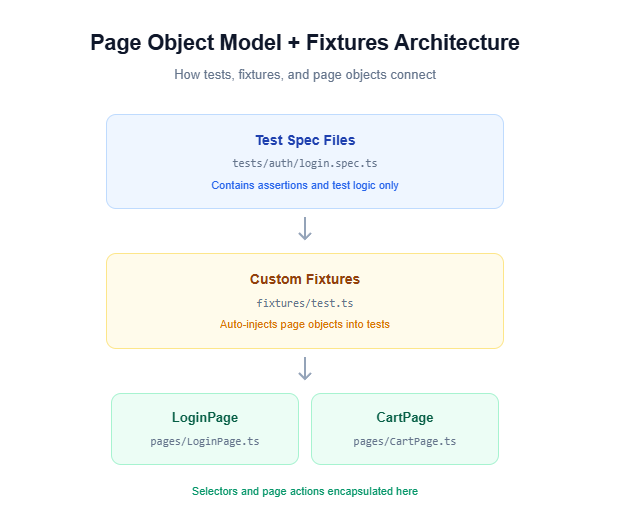

Fixtures and test hooks for scalable test organization

Once you have 10+ page objects, manually instantiating them in every test becomes boilerplate. Playwright fixtures eliminate that repetition:

```typescript
import { test as base } from '@playwright/test';
import { LoginPage } from '../pages/LoginPage';
import { CartPage } from '../pages/CartPage';

type TestFixtures = {
  loginPage: LoginPage;
  cartPage: CartPage;
};

export const test = base.extend<TestFixtures>({
  loginPage: async ({ page }, use) => {
    await use(new LoginPage(page));
  },
  cartPage: async ({ page }, use) => {
    await use(new CartPage(page));
  },
});

export { expect } from '@playwright/test';
```

Tests then import from your fixture file instead of the base @playwright/test:

```typescript
import { test, expect } from '../../fixtures/test';

test('login works via fixture', async ({ loginPage, page }) => {
  await loginPage.goto();
  await loginPage.login('admin@example.com', 'password123');
  await expect(page).toHaveURL('/dashboard');
});
```

No more new LoginPage(page) in every test. The fixture handles creation and teardown automatically. This pattern is covered in depth in the reduce test maintenance guide.

Authentication handling with storageState: the pattern that saves 5-10 seconds per test

Every test that starts by navigating to a login page, filling credentials, and clicking "Sign in" wastes 5-10 seconds of execution time. Multiply that by 200 tests and you are burning 15+ minutes of pipeline time on repetitive login flows. Playwright's storageState feature solves this by authenticating once and reusing the session across all tests.
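The arithmetic behind that estimate, as a quick sketch using the paragraph's own figures (200 tests, 5-10 seconds per UI login). The result is summed test time; with several workers running in parallel, the wall-clock saving is roughly that total divided by the worker count:

```typescript
// Total time spent on UI logins across a suite, in minutes.
function loginOverheadMinutes(testCount: number, secondsPerLogin: number): number {
  return (testCount * secondsPerLogin) / 60;
}

const low = loginOverheadMinutes(200, 5);   // 1,000 seconds of logins
const high = loginOverheadMinutes(200, 10); // 2,000 seconds of logins
console.log(`${low.toFixed(1)}-${high.toFixed(1)} minutes`); // 16.7-33.3 minutes
```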

Setting up a shared authentication project

Create a setup file that performs the login and saves the session state. Name it something like tests/auth.setup.ts so it lives under testDir and matches the testMatch pattern used in the config below:

```typescript
import { test as setup, expect } from '@playwright/test';

const authFile = 'playwright/.auth/user.json';

setup('authenticate', async ({ page }) => {
  await page.goto('/login');
  await page.getByLabel('Email').fill('admin@example.com');
  await page.getByLabel('Password').fill('password123');
  await page.getByRole('button', { name: 'Sign in' }).click();
  await expect(page).toHaveURL(/.*dashboard/);

  // Save signed-in state to file
  await page.context().storageState({ path: authFile });
});
```

Then wire this into your playwright.config.ts using project dependencies:

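```typescript
import { defineConfig, devices } from '@playwright/test';

export default defineConfig({
  projects: [
    // Runs files such as auth.setup.ts before the browser projects
    { name: 'setup', testMatch: /.*\.setup\.ts/ },
    {
      name: 'chromium',
      use: {
        ...devices['Desktop Chrome'],
        // Every test in this project starts with the saved session
        storageState: 'playwright/.auth/user.json',
      },
      dependencies: ['setup'],
    },
  ],
});
```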

The dependencies: ['setup'] line ensures the authentication project runs first. Every subsequent test starts with a fully authenticated session, skipping the login flow entirely.

Tip: Add playwright/.auth/ to your .gitignore. The storage state file contains session cookies and should not be committed to version control.

Parallel execution: the 4 config settings that cut pipeline time in half

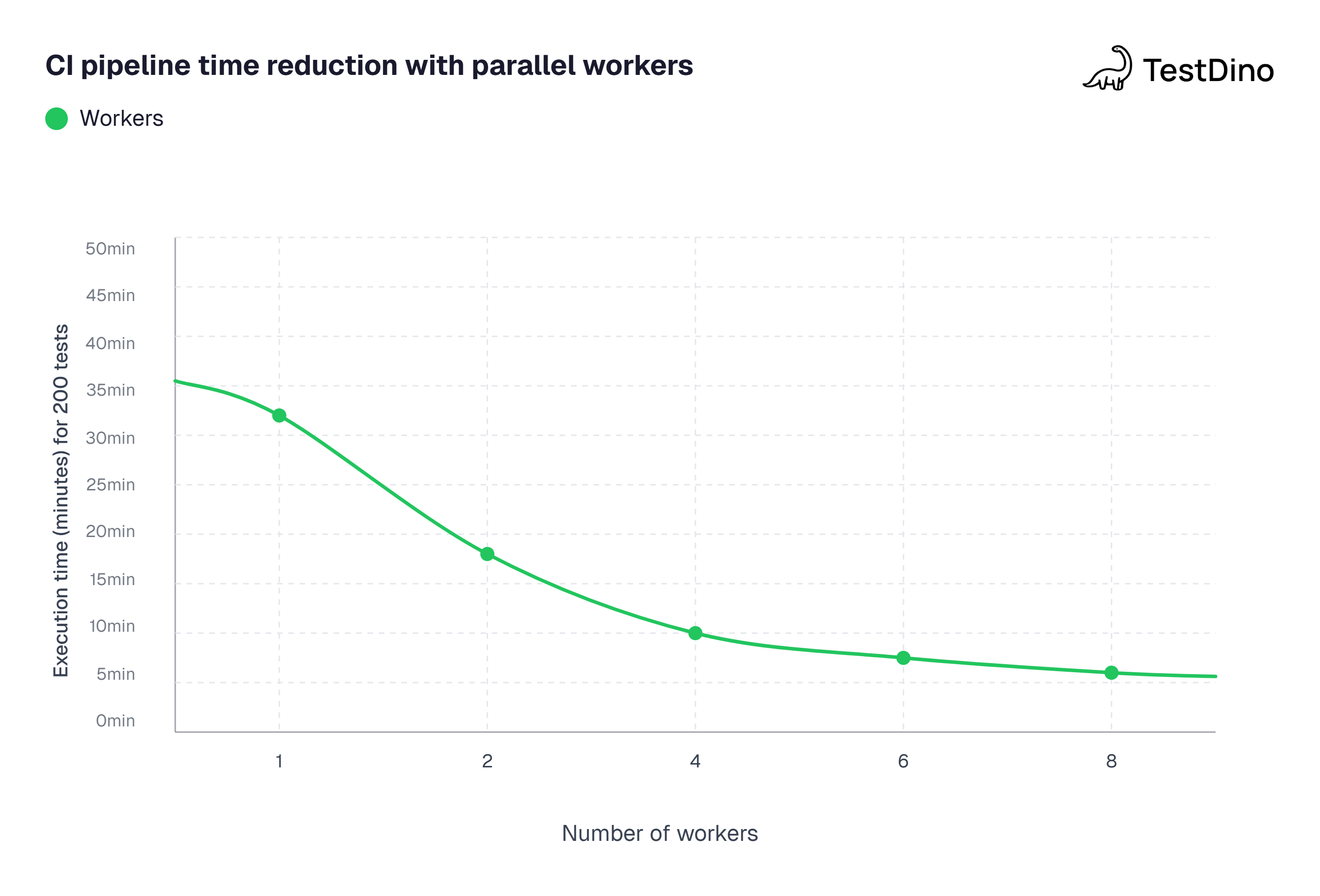

Running 200 tests sequentially on CI can take 30+ minutes. Parallel execution brings that under 15. Here are the four config settings that matter most, with advanced patterns in the Playwright parallel execution guide.

Worker configuration and sharding across CI agents

Setting 1: fullyParallel

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  fullyParallel: true,
});
```

Without this flag, tests within the same file run sequentially. With it enabled, every test runs independently across available workers. Enable this by default and only disable it for specific serial flows like multi-step checkout sequences.

Setting 2: workers

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  workers: process.env.CI ? 4 : undefined,
});
```

Controls how many parallel worker processes Playwright spawns. Starting with 4 workers on CI is a reasonable baseline. From there, optimize Playwright workers based on your runner's CPU cores and available memory.
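If a fixed number does not suit every runner, the workers option also accepts a percentage of logical CPU cores. A minimal sketch, assuming your CI runners have broadly similar core counts; when workers is left undefined, Playwright defaults to half of the logical cores:

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  // '50%' scales with the runner: roughly 2 workers on a 4-core machine, 4 on an 8-core one.
  // undefined locally lets Playwright use its default (half of the logical CPU cores).
  workers: process.env.CI ? '50%' : undefined,
});
```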

Setting 3: reporter set to blob for sharding

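```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  reporter: process.env.CI ? 'blob' : 'html',
});
```

When sharding across multiple CI agents, each agent produces a partial report. The blob reporter writes each shard's results as a zip archive in the blob-report/ directory, and npx playwright merge-reports combines those archives into a unified HTML report after all shards complete.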

Setting 4: the --shard CLI flag

```bash
npx playwright test --shard=1/4
npx playwright test --shard=2/4
npx playwright test --shard=3/4
npx playwright test --shard=4/4
```

Each command runs one quarter of the test suite. Combined with CI matrix builds, this splits work across four parallel agents, reducing a 200-test suite from 28 minutes to under 9 minutes.
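To see where the per-shard numbers come from, here is a simplified model of an even split. It is not Playwright's sharding code: Playwright divides test groups (whole files unless fullyParallel is enabled), so real shards can be less even. The gap between the ideal 7 minutes and the reported "under 9 minutes" is plausibly per-agent overhead such as checkout, npm ci, and browser installation:

```typescript
// Simplified model: split N tests as evenly as possible across shards.
function shardSizes(totalTests: number, shardTotal: number): number[] {
  const base = Math.floor(totalTests / shardTotal);
  const remainder = totalTests % shardTotal;
  // The first `remainder` shards take one extra test each.
  return Array.from({ length: shardTotal }, (_, i) => base + (i < remainder ? 1 : 0));
}

// Ideal wall-clock time if every shard takes an equal share and overhead is ignored.
function idealShardMinutes(sequentialMinutes: number, shardTotal: number): number {
  return sequentialMinutes / shardTotal;
}

console.log(shardSizes(200, 4));       // [ 50, 50, 50, 50 ]
console.log(shardSizes(10, 4));        // [ 3, 3, 2, 2 ]
console.log(idealShardMinutes(28, 4)); // 7
```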

Test isolation patterns that make parallelism safe

Parallel tests fail when they share mutable state. Three patterns prevent this:

- Use baseURL + unique routes instead of hardcoded URLs

- Seed test data via API calls per test instead of relying on a shared database state

- Use test.describe.configure({ mode: 'serial' }) only when tests genuinely depend on each other (like a multi-step checkout flow where step 2 requires step 1's output)

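```typescript
import { test, type Page } from '@playwright/test';

// Serial mode: tests run in order; if one fails, the remaining ones are skipped.
test.describe.configure({ mode: 'serial' });

test.describe('checkout flow', () => {
  // Each test normally gets a fresh page, so share one for steps that depend on each other.
  let page: Page;

  test.beforeAll(async ({ browser }) => {
    page = await browser.newPage();
  });

  test.afterAll(async () => {
    await page.close();
  });

  test('add item to cart', async () => {
    // step 1 (uses the shared page)
  });

  test('proceed to payment', async () => {
    // step 2 - depends on step 1
  });
});
```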

Source: Aggregated benchmarks from Playwright GitHub Discussions and community-shared CI performance reports (2025-2026). Test suite: 200 E2E tests on GitHub Actions ubuntu-latest runners. Note: actual times vary by test complexity and runner specs.
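For the second isolation pattern, each test needs data that no parallel worker or retry can collide with. A minimal sketch with a hypothetical helper, uniqueTestEmail; inside a Playwright test you could pass testInfo.workerIndex and testInfo.retry, while the helper itself has no Playwright dependency:

```typescript
// Hypothetical helper: build an email address that is unique per worker, retry, and call.
// Workers are separate processes, so the counter is per worker; workerIndex separates workers.
let sequence = 0; // incremented on every call, so two calls in the same millisecond still differ

function uniqueTestEmail(workerIndex: number, retry: number, now: number = Date.now()): string {
  sequence += 1;
  return `qa+w${workerIndex}-r${retry}-${now.toString(36)}-${sequence}@example.com`;
}

// Fixed timestamp here only to make the two results easy to compare.
const first = uniqueTestEmail(0, 0, 1_700_000_000_000);
const second = uniqueTestEmail(1, 0, 1_700_000_000_000);
console.log(first !== second); // true
```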

Integrating Playwright test automation into GitHub Actions and Jenkins

Tests that only run locally do not catch regressions. CI integration is where Playwright test automation delivers its real value: catching failures before they reach production.

Full GitHub Actions workflow with artifact upload

Here is a complete workflow handling installation, test execution, sharding, and artifact collection:

```yaml
name: Playwright Tests

on:
  push:
    branches: [main, develop]
  pull_request:
    branches: [main]

jobs:
  test:
    timeout-minutes: 30
    runs-on: ubuntu-latest
    strategy:
      fail-fast: false
      matrix:
        shardIndex: [1, 2, 3, 4]
        shardTotal: [4]
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with:
          node-version: 20
      - name: Install dependencies
        run: npm ci
      - name: Install Playwright browsers
        run: npx playwright install --with-deps
      - name: Run Playwright tests
        run: npx playwright test --shard=${{ matrix.shardIndex }}/${{ matrix.shardTotal }}
      - name: Upload blob report
        if: always()
        uses: actions/upload-artifact@v4
        with:
          name: blob-report-${{ matrix.shardIndex }}
          path: blob-report
          retention-days: 7

  merge-reports:
    if: always()
    needs: [test]
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
      - name: Install dependencies
        run: npm ci
      - name: Download blob reports
        uses: actions/download-artifact@v4
        with:
          path: all-blob-reports
          pattern: blob-report-*
          merge-multiple: true
      - name: Merge reports
        run: npx playwright merge-reports --reporter html ./all-blob-reports
      - name: Upload HTML report
        uses: actions/upload-artifact@v4
        with:
          name: playwright-report
          path: playwright-report
          retention-days: 14
```

Tip: The if: always() condition on the upload step is critical. Without it, GitHub Actions skips artifact uploads when tests fail, and you lose the debugging data you need most.

Handling headless mode, retries, and environment variables in CI

Three CI-specific settings that directly affect Playwright test automation reliability:

Headless mode: Playwright runs headless by default. On CI, always run headless. The headless vs headed comparison explains when headed mode is useful for local debugging.

Retries: Set retries: 2 on CI to catch intermittent failures while keeping pipeline time manageable. As an alternative to 'retain-on-failure', trace: 'on-first-retry' records a trace only on the first retry (the second attempt), saving storage for the runs that matter.

Environment variables: Pass BASE_URL through CI secrets to avoid hardcoding staging or production URLs:

```yaml
- name: Run Playwright tests
  run: npx playwright test
  env:
    BASE_URL: ${{ secrets.STAGING_URL }}
```
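The interplay between retries and trace modes is easy to misread, so here is a simplified model of which attempts record a trace. It covers only a few of Playwright's trace modes, and the names isRecorded and attemptsRecordingTrace are hypothetical. Attempt 0 is the first run, so retries: 2 allows attempts 0, 1, and 2; retries only happen after a failure, so these are the attempts that would record a trace if they run:

```typescript
type TraceMode = 'off' | 'on' | 'on-first-retry' | 'on-all-retries' | 'retain-on-failure';

// Whether a trace is recorded on a given attempt (0 = first run, 1 = first retry, ...).
const isRecorded: Record<TraceMode, (attempt: number) => boolean> = {
  off: () => false,
  on: () => true,
  'retain-on-failure': () => true, // recorded every time; traces of passing attempts are deleted
  'on-first-retry': (attempt) => attempt === 1,
  'on-all-retries': (attempt) => attempt >= 1,
};

function attemptsRecordingTrace(mode: TraceMode, retries: number): number[] {
  const attempts = Array.from({ length: retries + 1 }, (_, attempt) => attempt);
  return attempts.filter((attempt) => isRecorded[mode](attempt));
}

console.log(attemptsRecordingTrace('on-first-retry', 2));    // [ 1 ]
console.log(attemptsRecordingTrace('retain-on-failure', 2)); // [ 0, 1, 2 ]
```

One consequence worth knowing: with 'on-first-retry', a flaky test that fails once and then passes on its first retry leaves you a trace of the passing run, not of the original failure, whereas 'retain-on-failure' keeps the failing attempt's trace.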

Teams focused on long-term test health use test automation analytics dashboards to track pass rates, flakiness trends, and execution time patterns across branches and PRs.

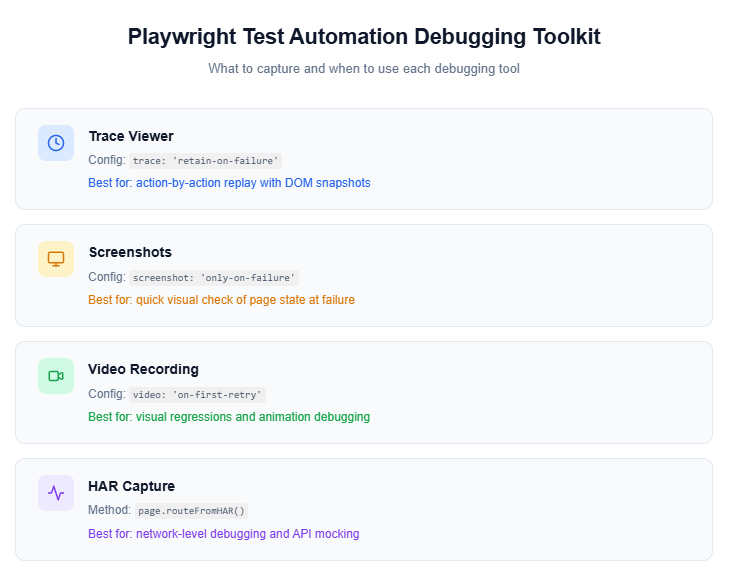

Debugging Playwright failures without guessing: trace viewer and screenshots

When a test fails in CI, the error message alone rarely tells the full story. Playwright provides three built-in debugging tools that replace guesswork with concrete evidence.

Using Playwright Trace Viewer to replay failures step by step

The Trace Viewer records every action during a test run: DOM state, network requests, console messages, and a visual filmstrip of each interaction.

Enable tracing in your config:

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  use: {
    trace: 'retain-on-failure',
  },
});
```

After a test fails, open the trace locally:

```bash
npx playwright show-trace test-results/checkout-flow/trace.zip
```

Or drag the trace.zip file into trace.playwright.dev, which loads entirely in your browser without transmitting data to any external server.

The Trace Viewer has several panels; four of them do most of the debugging work:

- Actions: every click, fill, and navigation with the locator used and time taken

- Network: all HTTP requests, sortable by status, duration, and content type

- Console: browser logs and test-level logs with source indicators

- Errors: the failed assertion with the exact line of test code that triggered it

Teams running Playwright test automation at scale use the Playwright observability platform from TestDino to automatically store and link trace artifacts to every CI run, making it possible to debug failures that happened days ago without re-running the pipeline.

Video recording and HAR capture for network-level debugging

For failures that require deeper investigation, enable video recording alongside traces:

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  use: {
    video: 'on-first-retry',
    trace: 'retain-on-failure',
  },
});
```

Videos capture a continuous browser recording during test execution. They are most useful for catching visual glitches, layout shifts, or timing-dependent UI behaviors that static DOM snapshots miss entirely.

For network-level issues, the Trace Viewer's Network tab already shows each request and response captured in the trace. To record traffic to a HAR (HTTP Archive) file that later runs can replay, use page.routeFromHAR() with update: true:

```typescript
import { test } from '@playwright/test';

test('capture HAR for API debugging', async ({ page }) => {
  // update: true records matching traffic into the HAR instead of serving from it
  await page.routeFromHAR('tests/fixtures/api.har', {
    url: '**/api/**',
    update: true,
  });
  await page.goto('/dashboard');
  // The HAR file is written with the real network data when the browser context closes
});
```

Record real API responses once, then remove update: true (it defaults to false) so future runs replay responses from the HAR file. This makes tests faster and eliminates dependencies on backend availability during test execution.

Source: State of JavaScript 2024 survey (stateofjs.com), "Testing" section, respondent usage counts.

API testing and mocking inside Playwright: what most teams overlook

Playwright goes beyond browser automation. Its page.route() API lets you intercept, mock, and modify network requests directly within your E2E tests, giving you control over backend responses without touching the actual server.

Route interception and response mocking with examples

Instead of depending on a live backend for every test run, mock specific API responses:

```typescript
import { test, expect } from '@playwright/test';

test('displays products from mocked API', async ({ page }) => {
  await page.route('**/api/products', async (route) => {
    await route.fulfill({
      status: 200,
      contentType: 'application/json',
      body: JSON.stringify([
        { id: 1, name: 'Laptop', price: 999 },
        { id: 2, name: 'Keyboard', price: 79 },
      ]),
    });
  });

  await page.goto('/products');
  await expect(page.getByText('Laptop')).toBeVisible();
  await expect(page.getByText('$999')).toBeVisible();
});
```

Simulating error states validates that your application handles failures gracefully:

```typescript
test('shows error UI when API returns 500', async ({ page }) => {
  await page.route('**/api/products', (route) =>
    route.fulfill({ status: 500, body: 'Internal Server Error' })
  );

  await page.goto('/products');
  await expect(page.getByText('Something went wrong')).toBeVisible();
});
```

Important: Always define page.route() calls before page.goto(). Routes registered after navigation will miss the initial page load requests and your mock may never intercept the calls you intended.

Combining API setup with UI test flows for faster, lighter tests

The most effective test pattern uses API calls for data setup and browser interactions for validation:

```typescript
import { test, expect } from '@playwright/test';

test('verify order appears in dashboard after API creation', async ({ page, request }) => {
  // Setup: create order via API (skip the slow UI flow)
  const response = await request.post('/api/orders', {
    data: { product: 'Laptop', quantity: 1 },
  });
  expect(response.ok()).toBeTruthy();
  const order = await response.json();

  // Test: verify in UI (getByText expects a string, so convert a numeric id)
  await page.goto('/dashboard/orders');
  await expect(page.getByText(String(order.id))).toBeVisible();
});
```

This pattern keeps tests fast by skipping repetitive UI setup while still validating end-to-end rendering. It is one of the most impactful optimizations for scaling Playwright test automation suites past 100 tests.

Visual regression testing with Playwright screenshots

UI changes that break layout or styling are invisible to functional assertions. A button might still be clickable, but if it shifted 200 pixels to the right, users will notice even though your tests pass. Playwright's built-in screenshot comparison catches these regressions automatically.

Using toHaveScreenshot for pixel-level comparisons

```typescript
import { test, expect } from '@playwright/test';

test('dashboard layout matches baseline', async ({ page }) => {
  await page.goto('/dashboard');
  await expect(page).toHaveScreenshot('dashboard.png');
});

test('product card renders correctly', async ({ page }) => {
  await page.goto('/products');
  const card = page.locator('[data-testid="product-card"]').first();
  await expect(card).toHaveScreenshot('product-card.png', {
    maxDiffPixelRatio: 0.01,
  });
});
```

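On the first run, no baseline exists yet, so Playwright writes the captured screenshot as the new baseline and reports the test as failed. Subsequent runs compare against that baseline and fail if the difference exceeds the configured tolerance (here, maxDiffPixelRatio: 0.01 allows up to 1% of pixels to differ).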
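To get a feel for what maxDiffPixelRatio: 0.01 permits, it helps to turn the ratio into a pixel count. The helper below is a back-of-the-envelope model, not Playwright's comparator. It assumes a full 1280×720 viewport capture (Playwright's default viewport size) and ignores the separate per-pixel threshold option (default 0.2), which decides whether an individual pixel counts as different in the first place:

```typescript
// Model of maxDiffPixelRatio: the fraction of all pixels that may differ
// before the comparison fails. Not Playwright's internal implementation.
function maxDiffPixelsAllowed(width: number, height: number, ratio: number): number {
  return Math.floor(width * height * ratio);
}

// True if the number of differing pixels stays within the allowed ratio.
function withinTolerance(
  diffPixels: number,
  width: number,
  height: number,
  ratio: number,
): boolean {
  return diffPixels <= maxDiffPixelsAllowed(width, height, ratio);
}

// A 1280x720 capture with maxDiffPixelRatio: 0.01 tolerates up to 9,216 differing pixels.
const allowed = maxDiffPixelsAllowed(1280, 720, 0.01); // 9216
```

A 1% budget on a full page is large enough to hide a shifted button, which is why the original example applies it to a single product card rather than the whole dashboard.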

Handling dynamic content in visual tests

Dynamic elements like timestamps, avatars, or live data cause false failures. Mask them before capturing:

```typescript
test('settings page visual check', async ({ page }) => {
  await page.goto('/settings');
  await expect(page).toHaveScreenshot('settings.png', {
    mask: [
      page.locator('.timestamp'),
      page.locator('.user-avatar'),
    ],
    animations: 'disabled',
  });
});
```

Key Insight: Generate baseline screenshots in CI, not locally. Browser rendering varies by OS and hardware. Using the official Playwright Docker images in CI ensures consistent baselines across your team.

Update baselines when the UI changes intentionally by running npx playwright test --update-snapshots.

Test tagging, filtering, and organization at scale

A 200-test suite needs more than folder structure to stay manageable. Playwright's tagging and filtering features let you run subsets of tests without modifying config files, which is critical for fast CI feedback loops.

Tagging tests for smoke, regression, and feature-specific runs

Use the tag option to categorize tests:

```typescript
import { test, expect } from '@playwright/test';

test('add item to cart', { tag: '@smoke' }, async ({ page }) => {
  await page.goto('/products');
  await page.getByRole('button', { name: 'Add to Cart' }).click();
  await expect(page.getByTestId('cart-count')).toHaveText('1');
});

test('remove item from cart', { tag: ['@regression', '@cart'] }, async ({ page }) => {
  // test implementation
});
```

Run tagged subsets from the CLI:

```bash
# Run only smoke tests
npx playwright test --grep @smoke

# Run everything except slow tests
npx playwright test --grep-invert @slow
```
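Because --grep and --grep-invert take regular expressions, tags can be combined: the Playwright docs describe --grep "@fast|@slow" for tests carrying either tag and --grep "(?=.*@fast)(?=.*@slow)" for tests carrying both. The sketch below is a simplified model of that selection (TestCase and selectTests are hypothetical names, not Playwright internals) that matches each pattern against the test title plus its tags:

```typescript
// Simplified model of --grep / --grep-invert: each pattern is tested against
// the test title joined with its tags. Not Playwright's actual implementation.
interface TestCase {
  title: string;
  tags: string[];
}

function selectTests(tests: TestCase[], grep?: RegExp, grepInvert?: RegExp): string[] {
  return tests
    .filter((t) => {
      const haystack = [t.title, ...t.tags].join(' ');
      if (grep && !grep.test(haystack)) return false; // must match --grep
      if (grepInvert && grepInvert.test(haystack)) return false; // must not match --grep-invert
      return true;
    })
    .map((t) => t.title);
}

const suite: TestCase[] = [
  { title: 'add item to cart', tags: ['@smoke'] },
  { title: 'remove item from cart', tags: ['@regression', '@cart'] },
  { title: 'bulk import', tags: ['@regression', '@slow'] },
];

const smoke = selectTests(suite, /@smoke/); // ['add item to cart']
const orTags = selectTests(suite, /@smoke|@cart/); // first two tests (OR)
const andTags = selectTests(suite, /(?=.*@regression)(?=.*@cart)/); // ['remove item from cart'] (AND)
const notSlow = selectTests(suite, undefined, /@slow/); // first two tests
```

Note that the bare word "cart" in a title does not match @cart, which is one reason to keep the @ prefix on every tag.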

Using test.step for structured reporting

Break complex tests into labeled steps that appear in the HTML report as a hierarchy:

```typescript
test('complete purchase flow', { tag: '@e2e' }, async ({ page }) => {
  await test.step('Navigate to product page', async () => {
    await page.goto('/products/laptop');
  });

  await test.step('Add to cart and verify', async () => {
    await page.getByRole('button', { name: 'Add to Cart' }).click();
    await expect(page.getByTestId('cart-count')).toHaveText('1');
  });

  await test.step('Complete checkout', async () => {
    await page.goto('/checkout');
    await page.getByLabel('Card number').fill('4242424242424242');
    await page.getByRole('button', { name: 'Pay' }).click();
    await expect(page.getByText('Order confirmed')).toBeVisible();
  });
});
```

When this test fails, the HTML report tells you exactly which step failed, not just which test. The Playwright annotations guide covers additional metadata options for organizing test results at scale.

Troubleshooting common Playwright test automation issues

Even well-configured Playwright projects hit recurring problems. Here are the issues QA teams encounter most frequently and the specific fixes for each:

browserType.launch: Executable doesn't exist

Playwright cannot find browser binaries. This is almost always a CI issue where binaries were not installed or versions are mismatched.

```bash
# Fix: install browsers with OS dependencies
npx playwright install --with-deps
```

Tests pass locally but fail on CI with TimeoutError

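CI runners are usually slower than developer machines. Rather than adding sleeps, give CI more headroom by raising timeouts only when the CI environment variable is set. Note that actionTimeout defaults to 0 (no per-action limit), so a slow action is normally cut off by the overall test timeout (30 seconds by default), while web-first assertions are bounded by the expect timeout (5 seconds by default):

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  // Per-test timeout (default 30s): extra headroom on slower CI runners
  timeout: process.env.CI ? 60_000 : 30_000,
  expect: {
    // Timeout for web-first assertions such as toBeVisible() (default 5s)
    timeout: process.env.CI ? 10_000 : 5_000,
  },
  use: {
    // Optional per-action cap so a stuck click fails fast instead of consuming the whole test timeout
    actionTimeout: process.env.CI ? 15_000 : 10_000,
  },
});
```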

Tests interfere with each other during parallel runs

Shared mutable state is usually the root cause. Playwright Test already runs every test in a fresh BrowserContext with its own cookies and storage, so avoid sharing a context or page across tests (for example, through module-level variables). Seed data per test via API calls instead of relying on a shared database that multiple parallel workers modify simultaneously.
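One way to apply per-test seeding is to derive a unique reference for every record a test creates, so records created by tests in different workers, or by a retry of the same test, never collide. This is a sketch with hypothetical names: OrderSeed, uniqueOrderSeed, and the reference field are assumptions about your API. In a real test you might pass test.info().workerIndex and test.info().testId; here they are plain parameters so the snippet runs anywhere:

```typescript
// Hypothetical payload for the order-creation API; `reference` is an assumed field.
interface OrderSeed {
  product: string;
  quantity: number;
  reference: string;
}

// Build order data that is unique per worker and per test. The timestamp
// also separates a retry from the attempt that preceded it.
function uniqueOrderSeed(workerIndex: number, testId: string, product: string): OrderSeed {
  const reference = `w${workerIndex}-${testId}-${Date.now()}`;
  return { product, quantity: 1, reference };
}
```

The seed would then go straight into the setup call, for example request.post('/api/orders', { data: uniqueOrderSeed(...) }), and assertions can look up the record by its reference instead of by a shared, guessable value.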

Traces are not generated for failed tests

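Verify that your trace config is set to 'retain-on-failure' or 'on-first-retry'. Keep in mind that 'on-first-retry' only records a trace when a test is retried, so it produces nothing when retries is 0. On CI, make sure the artifact upload step includes if: always(). Without it, the upload step is skipped whenever the test step fails, so traces from failed runs are never uploaded.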
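The difference between the two modes matters most when retries are disabled. The following simplified model (not Playwright's implementation; only the mode strings match the documented trace option values) shows which attempts leave a trace behind, where retry is 0 for the first run and 1 for the first retry:

```typescript
// Subset of the documented trace modes used in this model.
type TraceMode = 'off' | 'on' | 'retain-on-failure' | 'on-first-retry' | 'on-all-retries';

// Does this attempt end up with a saved trace?
function traceKept(mode: TraceMode, retry: number, failed: boolean): boolean {
  switch (mode) {
    case 'on':
      return true;
    case 'retain-on-failure':
      // Recorded on every attempt, but discarded when the attempt passes.
      return failed;
    case 'on-first-retry':
      // Only the first retry is recorded, so retries: 0 never produces a trace.
      return retry === 1;
    case 'on-all-retries':
      return retry >= 1;
    default:
      // 'off'
      return false;
  }
}
```

With retries: 0 every attempt has retry === 0, so traceKept('on-first-retry', 0, true) is false: a failing test in that configuration leaves nothing to upload, no matter how the CI step is written.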

Key takeaways

Here is a summary of the decisions that determine whether your Playwright test automation scales smoothly or creates more maintenance than it saves:

- Start with production-ready config: Set fullyParallel, forbidOnly, retries, and trace from day one. Retrofitting these later is harder than setting them up front.
- Use role-based locators first: They are the most resilient to UI changes and align with accessibility best practices.
- Never use page.waitForTimeout(): Replace every hard sleep with web-first assertions or explicit waitFor() conditions.
- Adopt POM + fixtures early: The upfront investment in page objects and custom fixtures pays back exponentially once your suite grows past 50 tests.
- Authenticate once with storageState: Save 5-10 seconds per test by reusing login sessions instead of repeating the UI login flow.
- Shard on CI: Four shards across a GitHub Actions matrix can cut pipeline time by 60-70%.
- Capture traces on failure: retain-on-failure gives you full debugging context without the storage overhead of tracing every test.
- Mock APIs for speed and isolation: Use page.route() to decouple your E2E tests from backend availability.
- Add visual regression checks: toHaveScreenshot() catches layout and styling regressions that functional assertions miss entirely.
- Track flakiness trends: Integrate with a test automation analytics dashboard to catch regressions before they compound into systemic instability.
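The 60-70% sharding figure can be sanity-checked with simple arithmetic. The model below is idealized: it assumes tests split evenly across shards and that every CI job pays the same fixed setup overhead (checkout, dependency install, browser install). The 20-minute suite and 2-minute overhead are hypothetical numbers, and unevenly balanced shards will save less, so treat the result as a best case:

```typescript
// Wall-clock time of the slowest job, assuming perfectly balanced shards
// and a fixed per-job setup overhead.
function shardedWallClock(totalTestMinutes: number, overheadMinutes: number, shards: number): number {
  return totalTestMinutes / shards + overheadMinutes;
}

// Fraction of pipeline time saved versus running everything in one job.
function timeSaved(totalTestMinutes: number, overheadMinutes: number, shards: number): number {
  const single = shardedWallClock(totalTestMinutes, overheadMinutes, 1);
  const sharded = shardedWallClock(totalTestMinutes, overheadMinutes, shards);
  return 1 - sharded / single;
}

// Hypothetical suite: 20 minutes of test time plus 2 minutes of setup per job.
const fourShardMinutes = shardedWallClock(20, 2, 4); // 7
const saved = timeSaved(20, 2, 4); // 1 - 7/22 ≈ 0.68
```

Because the fixed overhead is paid once per shard, adding more shards gives diminishing returns: the saving can never exceed the share of time spent actually running tests.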
